Tag: bayes factor

-

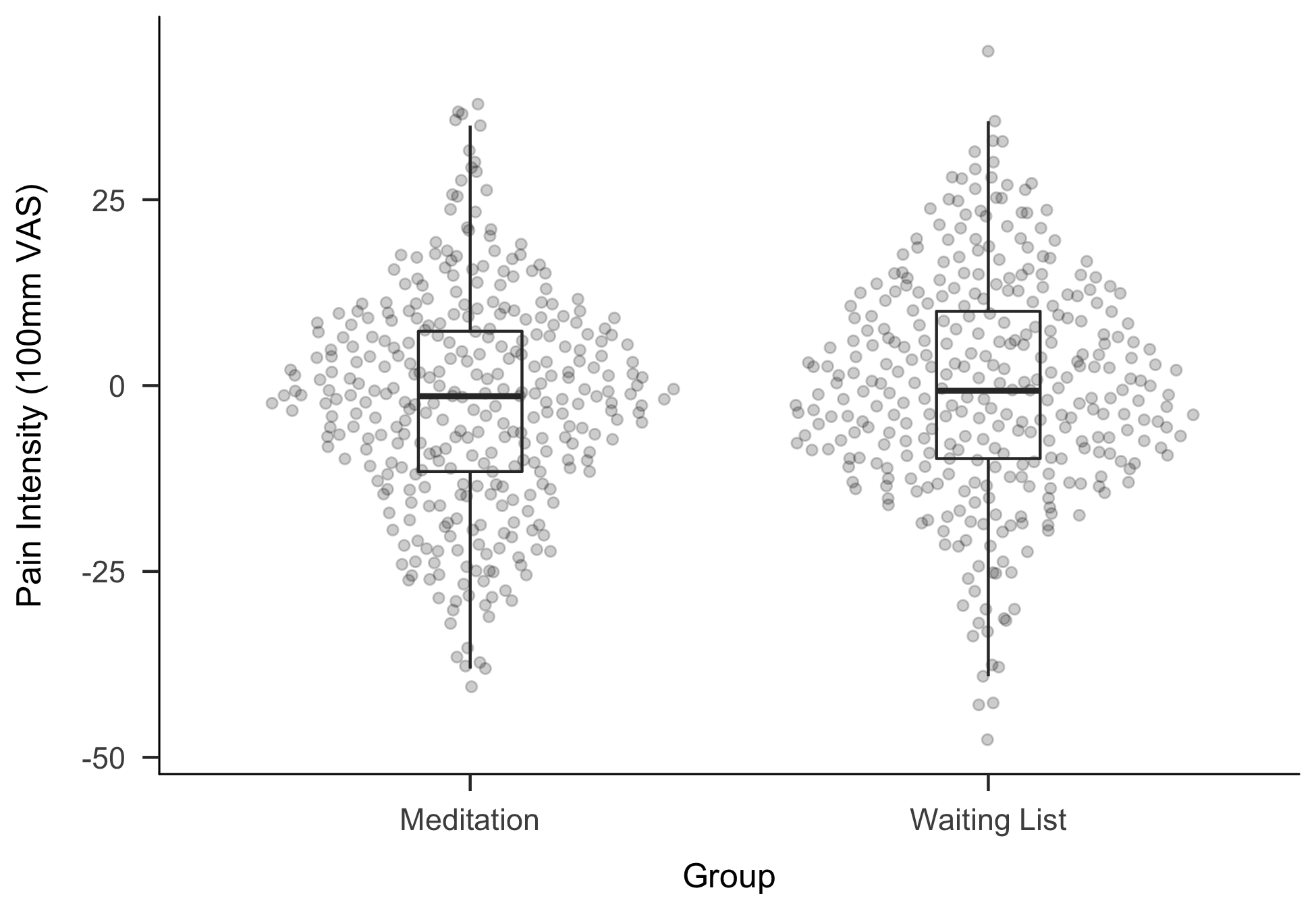

New Preprint: Making “Null Effects” Informative

In February and March this year, I stayed at Eindhoven University of Technology in the amazing group of Daniël Lakens, Anne Scheel and Peder Isager, who are actively researching questions of replicability in psychological science. Over the two months I learned a lot, exchanged some great ideas with the three of them – and…

-

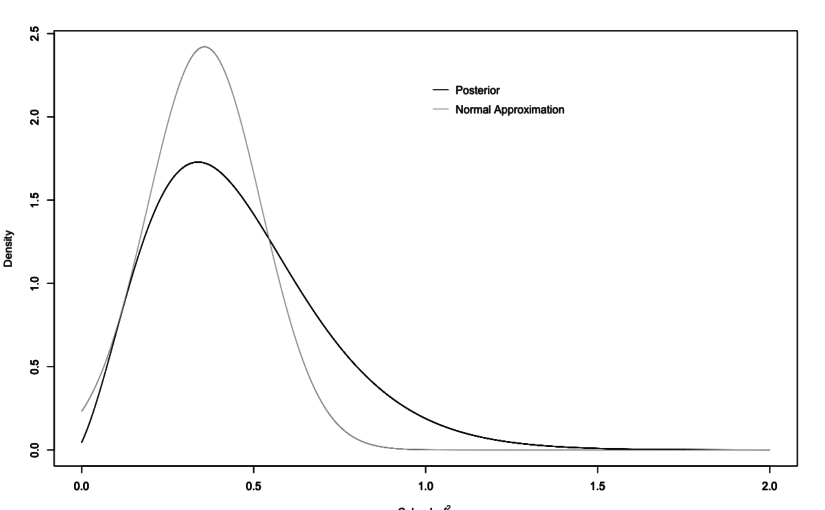

Update on the Replication Bayes Factor

In December I blogged about the ReplicationBF package, which I made available on GitHub. It allows you to calculate Replication Bayes Factors for t- and F-tests. The preprint detailing the formulas for the latter was outdated and the method in the package was not optimal, so I recently updated both.

-

p-hacking destroys everything (not only p-values)

In the context of problems with replicability in psychology and other empirical fields, statistical significance testing and p-values have received a lot of criticism. And without question: much of the criticism has its merits. There certainly are problems with how significance tests are used and p-values are interpreted. However, when we are talking about “p-hacking”,…

-

ReplicationBF: An R-Package to calculate Replication Bayes Factors

Some months ago I wrote a manuscript on how to calculate Replication Bayes factors for replication studies involving F-tests, as is usually the case for ANOVA-type studies. After a first round of peer review, I have revised the manuscript and updated all the R scripts. I have written a small R package to have all functions…

-

New Preprint: A Bayes Factor for Replications of ANOVA Results

Some weeks ago I finished up some thoughts on a Replication Bayes factor for ANOVA contexts, which resulted in a manuscript that is available as a preprint on arXiv. The theoretical foundation was laid out earlier by Verhagen & Wagenmakers (2014), and my manuscript is mainly an extension of their approach. We have another paper…

-

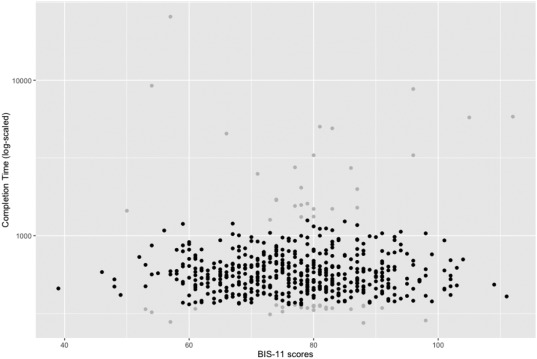

New Paper: Impulsivity and Completion Time in Online Questionnaires

My first first-author paper has been published in Personality and Individual Differences. The paper is titled “Reliability and completion speed in online questionnaires under consideration of personality” (doi:10.1016/j.paid.2017.02.015) and was written together with Lina and Christian.

-

ASA statement on p-Values: Improving valid statistical reasoning

A lot of debate (and part of my thesis) revolves around replicability and the proper use of inferential methods. The American Statistical Association has now published a statement on the use and interpretation of p-Values (freely available, yay). It includes six principles on how to handle p-Values. None of them are new in a theoretical…